This project started as a class project for one of my graduate courses, "Algorithmic Robotics", which focuses on algorithmic approaches to problems in robotics. My project focused on localizing a mobile robot in a controlled environment. For almost any autonomous mobile robot, localization (knowing where it is) is a key problem to solve before it can accomplish the tasks it was designed for. The classic example is a Roomba, which needs to know where it is and where it has been at all times in order to determine whether it has covered the entire floor.

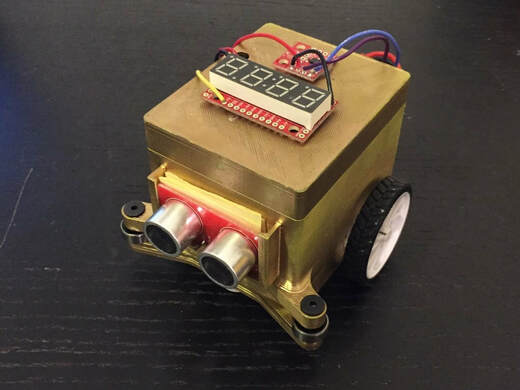

While Roombas cost hundreds of dollars and use sophisticated sensors to achieve this, I had only one month to complete my project on a graduate student budget, so some simplifications were necessary. To save time and money, I reused the Arduino-based mobile robot with an ultrasonic sensor from my glove-controlled maze-solving robot. The ultrasonic sensor would be my main source of information for scanning the environment. Going back to the Roomba comparison, the technology iRobot uses is LIDAR-based, meaning a laser scanner spins in a circle to collect distance measurements all around the robot. Because my ultrasonic sensor only measures distance in the direction it is pointing, I needed a way to control how the robot turned while scanning so that I knew which direction each measurement was taken in. My solution was a 9-axis IMU (inertial measurement unit), a single sensor that combines an accelerometer, a magnetometer, and a gyroscope. For this project, I used the magnetometer (essentially a digital compass) to sense magnetic north and used it as my reference when turning, so that I could take measurements in specific directions. Alongside these two sensors, I added a serial seven-segment display to give visual feedback on the results of the localization algorithm.
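Using the magnetometer as a turning reference comes down to two small calculations: converting the horizontal magnetic field components into a compass heading, and finding the signed, wrapped difference between the current heading and the direction you want to measure. The sketch below assumes a level-mounted magnetometer that has already been calibrated; the function names `headingDegrees` and `headingError`, the axis convention, and which sign means "turn clockwise" are all assumptions that depend on the specific IMU and how it is mounted.

```cpp
#include <cmath>

// Hypothetical helper: compass heading in degrees, in [0, 360), computed from
// the magnetometer's horizontal components. Assumes the sensor is level and
// calibrated; the x/y axis orientation varies between IMU boards.
double headingDegrees(double magX, double magY) {
    const double kPi = 3.14159265358979323846;
    double heading = std::atan2(magY, magX) * 180.0 / kPi;  // range (-180, 180]
    if (heading < 0.0) {
        heading += 360.0;  // shift into [0, 360)
    }
    return heading;
}

// Hypothetical helper: smallest signed rotation (degrees) from the current
// heading to the target heading, wrapped into (-180, 180]. The sign tells the
// robot which way to turn; mapping sign to clockwise is an assumption.
double headingError(double target, double current) {
    double err = std::fmod(target - current, 360.0);  // range (-360, 360)
    if (err > 180.0) {
        err -= 360.0;
    }
    if (err <= -180.0) {
        err += 360.0;
    }
    return err;
}
```

A turning loop could then read the magnetometer, call `headingError(target, headingDegrees(mx, my))`, and keep rotating until the error falls within a small tolerance. Wrapping the error into (-180, 180] is what makes the robot take the short way around when the target is on the other side of north: turning from 350 to 10 degrees yields an error of 20 degrees rather than -340.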

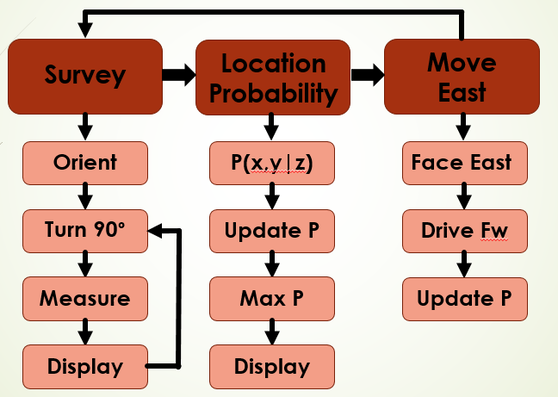

The next step was to develop the algorithm. I started by integrating all of the sensors and motor controllers connected to my Arduino and making sure they all worked properly before moving on to the algorithmic side of the programming. From there, it was mainly a matter of breaking the filter process down into subfunctions that could each be debugged individually. A diagram of the overall flow of the algorithm can be seen below.
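The post doesn't say which filter the localization uses, so as a hedged illustration of what breaking a filter into individually testable subfunctions can look like, here is a minimal sketch assuming a discrete (histogram) Bayes filter over a handful of candidate cells. Each cell stores the distances the ultrasonic sensor should read when facing each compass direction. The `Cell` map, the Gaussian noise model, the `sigma` parameter, and all function names are hypothetical. It is written in standard C++ for readability; on an AVR-based Arduino you would likely replace `std::vector` with fixed-size arrays.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical map entry: expected ultrasonic distance (cm) for each scan
// direction (e.g. N, E, S, W) if the robot were standing in this cell.
struct Cell {
    std::vector<double> expected;
};

// Likelihood of a measured scan given a cell, assuming independent Gaussian
// noise with standard deviation sigma (cm). The Gaussian's constant factor is
// omitted because normalize() cancels it (sigma is the same for every cell).
double scanLikelihood(const Cell& cell, const std::vector<double>& scan, double sigma) {
    assert(cell.expected.size() == scan.size());
    double likelihood = 1.0;
    for (std::size_t i = 0; i < scan.size(); ++i) {
        double diff = scan[i] - cell.expected[i];
        likelihood *= std::exp(-0.5 * diff * diff / (sigma * sigma));
    }
    return likelihood;
}

// Rescale the belief so it sums to 1; leaves it unchanged if it sums to 0.
void normalize(std::vector<double>& belief) {
    double total = 0.0;
    for (double b : belief) total += b;
    if (total <= 0.0) return;
    for (double& b : belief) b /= total;
}

// Measurement update: weight each cell's prior belief by how well the
// measured scan matches that cell, then renormalize.
void measurementUpdate(std::vector<double>& belief, const std::vector<Cell>& map,
                       const std::vector<double>& scan, double sigma) {
    assert(belief.size() == map.size());
    for (std::size_t c = 0; c < map.size(); ++c) {
        belief[c] *= scanLikelihood(map[c], scan, sigma);
    }
    normalize(belief);
}

// Index of the most probable cell, e.g. the number to show on the display.
std::size_t mostLikelyCell(const std::vector<double>& belief) {
    std::size_t best = 0;
    for (std::size_t c = 1; c < belief.size(); ++c) {
        if (belief[c] > belief[best]) best = c;
    }
    return best;
}
```

Because each function takes plain inputs and returns plain outputs, each one can be checked on its own before they are wired together; for example, `scanLikelihood` should return 1.0 for a scan that matches a cell's expected distances exactly. A motion (prediction) step is left out because this sketch assumes the robot scans from a single position.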
